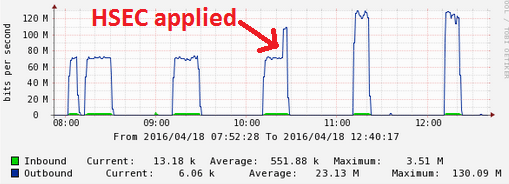

Time to replace the office 2951s with 4351s. Since the Internet pipes are 300 Mbps, we purchased the FL-44-PERF-K9 upgrades, which raise the throughput cap from 200 Mbps to 400 Mbps. I entered the PAK on the Cisco License Portal and installed the license, but testing still showed a 200 Mbps limit. The license commands indicated that the license had been installed, but not enabled/activated:

    Router#show license feature
    Feature name        Enforcement  Evaluation  Subscription  Enabled  RightToUse
    appxk9              yes          yes         no            no       yes
    uck9                yes          yes         no            no       yes
    securityk9          yes          yes         no            no       yes
    ipbasek9            no           no          no            yes      no
    FoundationSuiteK9   yes          yes         no            no       yes
    AdvUCSuiteK9        yes          yes         no            no       yes
    cme-srst            yes          yes         no            no       yes
    hseck9              yes          no          no            no       no
    throughput          yes          yes         no            no       yes
    internal_service    yes          no          no            no       no
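If you have a stack of routers to check, eyeballing that table gets old. Here is a minimal Python sketch that parses pasted `show license feature` output and reports the Enabled column for a feature. The function name `parse_license_features` is my own, and it assumes the six-column layout shown above; other IOS XE releases may format the output differently.

```python
# Hypothetical helper: parses `show license feature` text pasted from the CLI.
# Assumes each data row is a feature name followed by exactly five yes/no flags.
COLUMNS = ["Enforcement", "Evaluation", "Subscription", "Enabled", "RightToUse"]


def parse_license_features(output):
    """Return {feature_name: {column_name: bool}} for every data row."""
    features = {}
    for line in output.splitlines():
        fields = line.split()
        # Skip the prompt line, the header row, and blank lines.
        if len(fields) != 6 or any(f not in ("yes", "no") for f in fields[1:]):
            continue
        features[fields[0]] = {
            column: flag == "yes" for column, flag in zip(COLUMNS, fields[1:])
        }
    return features


SAMPLE = """\
Router#show license feature
Feature name Enforcement Evaluation Subscription Enabled RightToUse
ipbasek9 no no no yes no
throughput yes yes no no yes
"""

if __name__ == "__main__":
    features = parse_license_features(SAMPLE)
    print("throughput enabled:", features["throughput"]["Enabled"])
```

With the sample above, the throughput row parses with Enabled set to `False`, which matches what I saw before the fix.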

The licensing magic trick? Configure the platform to jump from 200 Mbps to 400 Mbps:

    Router(config)#platform hardware throughput level 400000
    % The config will take effect on next reboot

Upon rebooting, NOW the throughput license is enabled.

    Router#sh license feature
    Feature name        Enforcement  Evaluation  Subscription  Enabled  RightToUse
    throughput          yes          yes         no            yes      yes

    Router#show platform hardware throughput level
    The current throughput level is 400000 kb/s
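The throughput level is reported in kb/s, so 400000 works out to 400 Mbps. As a quick sanity check against the circuit speed, here is a small Python sketch that pulls the number out of the `show platform hardware throughput level` output. The function names are my own, and the regular expression assumes the exact wording shown above.

```python
import re


def throughput_mbps(output):
    """Extract the throughput level from the CLI output and convert kb/s to Mbps."""
    match = re.search(r"current throughput level is (\d+) kb/s", output)
    if match is None:
        raise ValueError("no throughput level found in output")
    return int(match.group(1)) / 1000


def cap_covers_circuit(output, circuit_mbps):
    """True if the platform throughput cap is at least the circuit speed."""
    return throughput_mbps(output) >= circuit_mbps


if __name__ == "__main__":
    line = "The current throughput level is 400000 kb/s"
    print(throughput_mbps(line), cap_covers_circuit(line, 300))
```

For our 300 Mbps pipes, the 400000 kb/s level passes the check, while the 200000 kb/s level (the 200 Mbps cap) would not.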